Welcome back to Agentic Coding Weekly. Here are the updates on agentic coding tools, models, and workflows for the week of Mar 1-7, 2026.

Executive Summary:

GPT-5.4 launched with a 1M-token context window. Scores 57.7% on SWE-Bench Pro and 75.1% on Terminal-Bench 2.0.

Gemini 3.1 Flash-Lite released with a notable pricing increase.

Claude Code gets scheduled tasks via the /loop skill. Session-scoped cron jobs for polling deployments, watching builds, or setting reminders.

Voice mode rolling out in Claude Code to ~5% of users. Push-to-talk, transcription at cursor position, no extra token cost.

Worth reading: Simon Willison's agentic engineering patterns guide and the relicensing argument that followed an AI-assisted codebase rewrite.

Meanwhile, if you feel addicted to Claude Code, check out this HN discussion thread.

1. Tooling and Model Updates

GPT-5.4

OpenAI's latest release. Scores 57.7% on SWE-Bench Pro (Public), just above GPT-5.3 Codex (56.8%), and 75.1% on Terminal-Bench 2.0, slightly below GPT-5.3 Codex's 77.3%. For reference, Claude Opus 4.6 scored 65.4% on Terminal-Bench 2.0 and Gemini 3.1 Pro scored 68.5%.

The expanded context window is the major update. GPT-5.4 supports 1M tokens, up from 400k on GPT-5.2 and GPT-5.3 Codex. In Codex, requests exceeding 272k tokens of context count at double the normal rate.
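A minimal sketch to make the 272k threshold concrete. It assumes the 2x multiplier applies to the whole request once its context passes 272k tokens; `codex_usage` and its abstract "usage" units are hypothetical and not how Codex actually meters usage.

```python
# Hypothetical sketch of the Codex long-context rule.
# Assumptions (not from OpenAI docs): "usage" is an abstract unit, and the
# 2x multiplier applies to the entire request once context exceeds 272k tokens.

LONG_CONTEXT_THRESHOLD = 272_000  # tokens
LONG_CONTEXT_MULTIPLIER = 2       # "double the normal rate"


def codex_usage(context_tokens: int, base_usage: float) -> float:
    """Return how much usage a request counts for, given its context size."""
    if context_tokens > LONG_CONTEXT_THRESHOLD:
        return base_usage * LONG_CONTEXT_MULTIPLIER
    return base_usage


if __name__ == "__main__":
    for tokens in (200_000, 272_000, 500_000):
        print(tokens, codex_usage(tokens, base_usage=1.0))
```

The practical takeaway: a session that drifts just past the threshold costs twice as much per request, so trimming context back under 272k can matter more than the raw token count suggests.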

Pricing increased to $2.50 / $15 per million input / output tokens, up from $1.75 / $14 on GPT-5.2.
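To see what the price change means per request, here is a quick comparison using only the list prices above. The 200k-input / 10k-output workload is a made-up example, and the dictionary keys are illustrative labels, not API model identifiers.

```python
# Per-request cost at the list prices quoted above (USD per 1M tokens).
# The workload below is hypothetical; keys are labels, not API model IDs.

PRICES_PER_MILLION = {
    # model: (input USD, output USD) per 1M tokens
    "gpt-5.2": (1.75, 14.00),
    "gpt-5.4": (2.50, 15.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD for one request at the quoted list prices."""
    in_price, out_price = PRICES_PER_MILLION[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


if __name__ == "__main__":
    for model in PRICES_PER_MILLION:
        cost = request_cost(model, input_tokens=200_000, output_tokens=10_000)
        print(f"{model}: ${cost:.2f}")
```

For this input-heavy workload the cost goes from $0.49 to $0.65 per request, roughly 33% more, and almost all of the increase comes from the higher input price.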

Check the announcement and the system card.

Quick Updates

Google released Gemini 3.1 Flash-Lite. Pricing went from $0.10 / $0.40 (Gemini 2.5 Flash-Lite) to $0.25 / $1.50 per million input / output tokens. In terms of capabilities, it beats Gemini 2.5 Flash (and obviously Flash-Lite) on most benchmarks.
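The size of the Flash-Lite increase is easier to see as multipliers. A small sketch using only the prices quoted above; the function name is illustrative.

```python
# Old vs. new Flash-Lite list prices, in USD per 1M tokens.
OLD_PRICES = {"input": 0.10, "output": 0.40}  # Gemini 2.5 Flash-Lite
NEW_PRICES = {"input": 0.25, "output": 1.50}  # Gemini 3.1 Flash-Lite


def price_multipliers(old: dict, new: dict) -> dict:
    """Return the new/old price ratio for each token type."""
    return {kind: new[kind] / old[kind] for kind in old}


if __name__ == "__main__":
    for kind, ratio in price_multipliers(OLD_PRICES, NEW_PRICES).items():
        print(f"{kind}: {ratio:.2f}x")
```

Input gets 2.5x more expensive and output 3.75x, so output-heavy workloads feel the change the most.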

Voice mode in Claude Code is rolling out to ~5% of users. Use /voice to toggle, hold space to talk, and release to send. It works like push-to-talk, and the transcript is inserted at the cursor position. There is no extra cost: voice transcription tokens don't count against rate limits.

Claude Code now supports scheduled tasks. The built-in /loop skill creates session-scoped cron jobs that can be used for polling tasks, build watchers, and one-time reminders. More details in the Workflow section below.

2. Workflow of the Week

Claude Code now has session-scoped scheduled tasks. Basically cron jobs that run inside an active session. Useful for polling deployments, babysitting PRs, watching builds, or setting reminders.

Scheduled tasks only live as long as the Claude Code session. Once the terminal is closed, they're gone. Scheduled tasks also expire 3 days after creation.

/loop is a bundled skill. Give it an interval and a prompt:

/loop 5m check if the deployment finished and tell me what happened

Claude Code converts this into a cron job that fires in the background. Supported interval units are s (seconds), m (minutes), h (hours), and d (days). We can also omit the interval, in which case it defaults to every 10 minutes. It can also be chained with other commands:
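A rough sketch of how the /loop arguments could be split into an interval and a prompt. The units and the 10-minute default come from the description above; `parse_loop_args` and its parsing rules (interval must be the first token, no space between number and unit) are assumptions, not Claude Code's actual implementation.

```python
import re
from typing import Tuple

# Hypothetical parser for "/loop [interval] <prompt>" arguments.
# Units and the 10-minute default follow the post; the rest is assumed.

UNIT_SECONDS = {"s": 1, "m": 60, "h": 3600, "d": 86400}
DEFAULT_INTERVAL_SECONDS = 10 * 60
_INTERVAL_RE = re.compile(r"^(\d+)([smhd])$")


def parse_loop_args(args: str) -> Tuple[int, str]:
    """Split /loop arguments into (interval_seconds, prompt).

    If the first token is not an interval like "5m", the default interval
    is used and the whole string is treated as the prompt.
    """
    text = args.strip()
    first, _, rest = text.partition(" ")
    match = _INTERVAL_RE.match(first)
    if match is None:
        return DEFAULT_INTERVAL_SECONDS, text
    amount, unit = int(match.group(1)), match.group(2)
    return amount * UNIT_SECONDS[unit], rest.strip()


if __name__ == "__main__":
    print(parse_loop_args("5m check if the deployment finished"))
    print(parse_loop_args("20m /review-pr 1234"))
    print(parse_loop_args("check the build"))
```

Treating a non-matching first token as part of the prompt is what makes the "skip the interval" form work without a separate flag.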

/loop 20m /review-pr 1234

For one-shot reminders, plain English works:

in 45 minutes, check whether the integration tests passed

Claude schedules a single-fire task and deletes it after it runs.
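To pull the lifecycle rules together (recurring tasks keep firing, one-shot tasks are deleted after running, everything expires 3 days after creation, and nothing outlives the session), here is a toy model. The `Task` and `Session` classes and the `tick()` loop are hypothetical illustrations, not Claude Code internals.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Toy model of session-scoped scheduled tasks. Class and method names are
# hypothetical. Tasks live only as long as the Session object does.

EXPIRY_SECONDS = 3 * 24 * 60 * 60  # tasks expire 3 days after creation


@dataclass
class Task:
    prompt: str
    created_at: float                 # seconds on an arbitrary clock
    next_fire: float                  # when the task is next due
    interval: Optional[float] = None  # None means one-shot


@dataclass
class Session:
    tasks: List[Task] = field(default_factory=list)

    def tick(self, now: float) -> List[str]:
        """Fire every due task, return their prompts, and prune the list."""
        fired: List[str] = []
        kept: List[Task] = []
        for task in self.tasks:
            if now - task.created_at >= EXPIRY_SECONDS:
                continue  # expired: dropped without firing
            if now >= task.next_fire:
                fired.append(task.prompt)
                if task.interval is None:
                    continue  # one-shot: deleted after it runs
                task.next_fire = now + task.interval  # recurring: re-arm
            kept.append(task)
        self.tasks = kept
        return fired
```

A 45-minute reminder would be `Task(prompt, created_at=now, next_fire=now + 2700)`, while `/loop 5m` would set `interval=300`. Dropping the `Session` object drops every task, mirroring how closing the terminal ends them.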

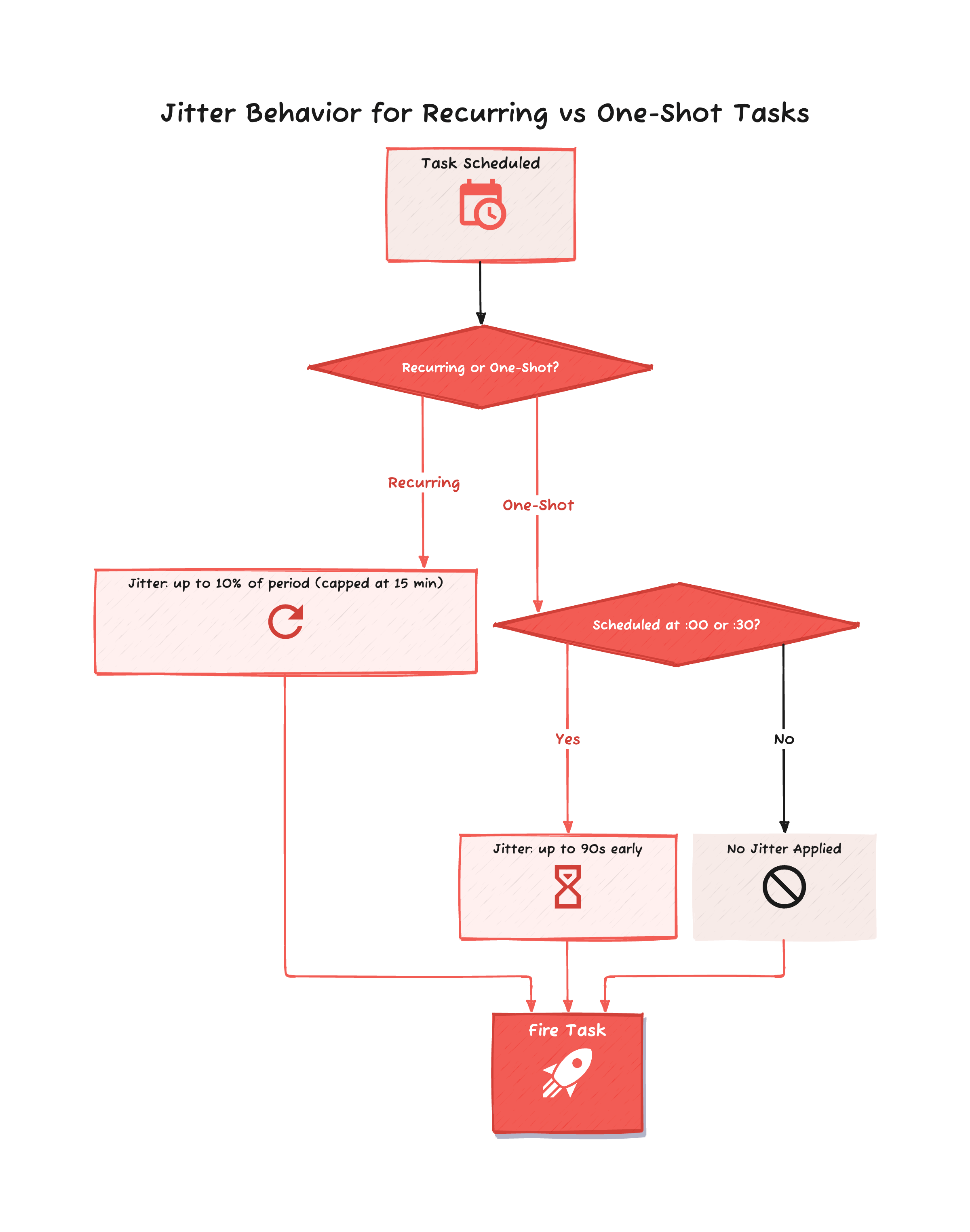

Also, to avoid every session hitting the Anthropic API at the same time, the scheduler adds a small jitter to fire times, so tasks don't execute exactly at the scheduled time.
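A minimal sketch of the jitter idea. The post only says the jitter is "small", so the 30-second cap is a hypothetical value, and adding delay only (never firing early) is a design choice of this sketch rather than documented behaviour.

```python
import random
from typing import Optional

# Spread fire times so many sessions with the same schedule don't hit the
# API in lockstep. MAX_JITTER_SECONDS is a hypothetical value.

MAX_JITTER_SECONDS = 30.0


def jittered_fire_time(
    scheduled: float,
    rng: Optional[random.Random] = None,
    max_jitter: float = MAX_JITTER_SECONDS,
) -> float:
    """Return the scheduled time plus a uniform random delay in [0, max_jitter]."""
    rng = rng or random.Random()
    return scheduled + rng.uniform(0.0, max_jitter)


if __name__ == "__main__":
    rng = random.Random(0)
    # Five sessions all scheduled for t=1000 end up firing at different times.
    print([round(jittered_fire_time(1000.0, rng), 1) for _ in range(5)])
```

Passing an explicit `random.Random` makes the behaviour reproducible in tests while still spreading calls out in practice.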

To manage tasks, we can ask "what scheduled tasks do I have?" or "cancel the deploy check job" and Claude handles it.

To try it out, next time a long CI run or deployment kicks off, instead of tab-switching to check on it:

/loop 5m check the status of the CI pipeline on branch feature/auth and summarize any failures

Claude will interrupt when something needs attention.

3. Community Picks

Agentic Engineering Patterns

Simon Willison is putting together a collection of practices and patterns for getting the best out of agentic coding. He adds a couple of new chapters every week. Go to the agentic engineering guide.

Relicensing with AI-Assisted Rewrite

Can AI produce a clean-room implementation?

The maintainers of the Python library chardet used Claude Code to perform a full codebase rewrite. The original code was a port of Mozilla's C++ chardet under the LGPL, and the rewrite was relicensed to MIT. The original author considers this a license violation. This post breaks down what happened.

Filesystems Are Having a Moment

An argument for filesystems as the primary interface for agentic memory and interoperability. Read the post.

Your LLM Doesn't Write Correct Code. It Writes Plausible Code.

In a simple primary-key lookup test on 100 rows, SQLite took 0.09 ms while an LLM-generated Rust rewrite took 1,815.43 ms, roughly 20,000x slower.
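A quick check of the slowdown figure, using only the two timings quoted above.

```python
# Back-of-the-envelope check of the quoted slowdown.
sqlite_ms = 0.09
rust_rewrite_ms = 1_815.43

slowdown = rust_rewrite_ms / sqlite_ms
print(f"{slowdown:,.0f}x slower")
```

The exact ratio is a little over 20,100x, so "about 20,000x" is a fair rounding.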

The author argues this is due to sycophancy: LLMs produce outputs that match what the user wants to hear rather than what they need to hear.

That’s it for this week. I write this newsletter every Monday. If this was useful, consider subscribing.