Welcome back to Agentic Coding Weekly. This week Leonard Lin shares his agentic coding setup, AGENTS.md configuration, and spec-driven development loop.

Here are the updates on agentic coding tools, models, and workflows for the week of Feb 15-21, 2026.

Executive Summary:

Anthropic released Claude Sonnet 4.6, matching Opus 4.5 capabilities on major benchmarks. 79.6% on SWE-bench Verified, 59.1% on Terminal-Bench 2.0.

Gemini 3.1 Pro released. First Google model to cross 80% on SWE-bench Verified (80.6%). 68.5% on Terminal-Bench 2.0.

Claude Code turns 1 tomorrow. It also got built-in git worktree support with `claude --worktree`.

Taalas prints LLM weights directly onto ASIC chips to bypass the Von Neumann bottleneck.

Meanwhile, the “car wash” question is the new “how many r's in strawberry”. The question is: I want to wash my car. The car wash is 50 meters away. Should I walk or drive?

In my test, Gemini 3.1 Pro and Opus 4.6 answered correctly, while Sonnet 4.6 and GPT-5.2-Codex told me to walk.

Gemini 3.1 Pro, Sonnet 4.6, Opus 4.6, and GPT-5.2-Codex on the car wash question: “I want to wash my car. The car wash is 50 meters away. Should I walk or drive?”

1. Tooling and Model Updates

Claude Sonnet 4.6

Two weeks after Opus 4.6, Sonnet 4.6 is the latest release from Anthropic. Early testing found users preferred Sonnet 4.6 over Sonnet 4.5 roughly 70% of the time, and preferred it over Opus 4.5 59% of the time. Check the announcement.

Scores 79.6% on SWE-bench Verified and 59.1% on Terminal-Bench 2.0. For comparison, Sonnet 4.5 scored 77.2% and 51% respectively, and Opus 4.6 scores 80.8% and 65.4%. The benchmarks also put Sonnet 4.6 roughly on par with Opus 4.5.

Pricing remains the same as Sonnet 4.5 at $3/$15 per million input/output tokens.

Gemini 3.1 Pro

The first Google model to cross the 80% threshold on SWE-bench Verified. Google's latest release scores 80.6% on SWE-bench Verified and 68.5% on Terminal-Bench 2.0. For comparison, Gemini 3 Pro scored 76.2% and 56.9% respectively.

Pricing is unchanged from Gemini 3 Pro: $2/$12 per million input/output tokens when context is under 200k tokens. Check the announcement.
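To make the quoted list prices concrete, here is a small hypothetical cost estimator. The dictionary keys are illustrative labels, not official API model IDs, and the sketch assumes the 200k threshold applies to prompt (input) size. Cache discounts, batch pricing, and Gemini's higher long-context tier are deliberately not modeled.

```python
# Hypothetical per-request cost estimator using the list prices quoted above
# (USD per million tokens). Keys are illustrative labels, not API model IDs.
PRICES_PER_MTOK = {
    "claude-sonnet-4.6": (3.00, 15.00),  # (input, output)
    "gemini-3.1-pro": (2.00, 12.00),     # only valid for prompts <= 200k tokens
}

# Assumption: the 200k threshold refers to the prompt (input) size.
GEMINI_TIER_LIMIT = 200_000


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD for a single request."""
    if model == "gemini-3.1-pro" and input_tokens > GEMINI_TIER_LIMIT:
        # The higher long-context price tier is not covered by this sketch.
        raise ValueError("long-context Gemini pricing is not modeled here")
    in_price, out_price = PRICES_PER_MTOK[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


if __name__ == "__main__":
    for name in PRICES_PER_MTOK:
        print(name, f"${estimate_cost(name, 50_000, 4_000):.4f}")
```

With these numbers, a request with 50k input and 4k output tokens comes to about $0.21 on Sonnet 4.6 and $0.148 on Gemini 3.1 Pro, before any caching discounts.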

Quick Updates

JetBrains published an Agent Skill that helps coding agents write modern Go code. Link to SKILL.md on GitHub.

Claude Code turns one year old tomorrow, February 24th. It originally launched alongside Claude 3.7 Sonnet.

Claude Code now has built-in git worktree support. Running `claude --worktree` or `claude -w` creates an isolated worktree with a randomly generated name and starts a session inside it. Check the docs for more details.
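Claude Code handles this for you, but the underlying git mechanism is simple. The sketch below builds a `git worktree add` command for a randomly named worktree on a new branch of the same name. The `wt-` prefix and the directory layout are assumptions for illustration, not what Claude Code actually does.

```python
import secrets
import subprocess
from pathlib import Path


def worktree_command(repo: Path, base_dir: Path) -> tuple[list[str], Path]:
    """Build a `git worktree add` command for a new, randomly named worktree.

    Hypothetical layout: the worktree lives at base_dir/<name> on a new branch <name>.
    """
    name = f"wt-{secrets.token_hex(4)}"  # random name: "wt-" + 8 hex characters
    path = base_dir / name
    # `git -C <repo>` runs git as if started in <repo>; `-b` creates the branch.
    cmd = ["git", "-C", str(repo), "worktree", "add", "-b", name, str(path)]
    return cmd, path


def create_worktree(repo: Path, base_dir: Path) -> Path:
    """Actually create the worktree (requires git and a repo with at least one commit)."""
    cmd, path = worktree_command(repo, base_dir)
    subprocess.run(cmd, check=True)
    return path
```

You would then start an agent session inside the returned directory, so each session edits its own checkout instead of fighting over one working tree.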

2. Workflow of the Week

This week's workflow is from Leonard Lin, founder and CTO of Shisa.AI. Over to Leonard:

I've been closely tracking AI-assisted coding since the ChatGPT launch. My goal is to keep an eye on the bleeding edge without getting caught up chasing every new thing. There's a lot of churn and FOMO in the space, but things also get "baked in" very quickly, and it's important to remember that the point is to save time and effort. What I'm after is Pareto efficiency: see what's left once the hype boils off, and adopt what gives the best gains for my actual work (vs. yak-shaving).

Big Picture

Opus 4.5 and GPT-5.2 were the biggest game changers for me, and since their release they do 99% of my code-writing. Before that, I was still going through most of my code (except for one-off scripts I didn't care about), but now I mainly enforce quality through specs and test coverage, even for long-lived projects (the latest models can spelunk and parse code better than I can).

My Current Setup

This is in a state of flux: new scaffolding keeps coming out and I've been poking at new scaffolds and control planes, so I fully expect my setup to look quite different in 6 months. For now, my main setup is byobu with aggressive F8 pane labeling:

One byobu session per project

Instead of subagents/swarms, I aggressively create new panes whenever I need one

Every pane gets descriptively named (e.g., coder-5.3, planner-claude, etc.)

I tend to use a single main coder per worktree but have multiple planners or reviewers
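The session-per-project, descriptively-named layout above can be scripted. The sketch below generates plain tmux commands (byobu runs on top of tmux) for one detached session per project with one named window per agent. It uses named windows rather than split panes, and the function and window names are hypothetical examples, not Leonard's actual tooling.

```python
import shlex


def project_session_commands(project: str, windows: list[str]) -> list[list[str]]:
    """Return tmux commands: one detached session per project, one named window per agent."""
    if not windows:
        raise ValueError("need at least one window name")
    first, *rest = windows
    # The first window is created together with the session.
    cmds = [["tmux", "new-session", "-d", "-s", project, "-n", first]]
    for name in rest:
        # "project:" (trailing colon) targets the session, so tmux picks the next free index.
        cmds.append(["tmux", "new-window", "-t", f"{project}:", "-n", name])
    return cmds


if __name__ == "__main__":
    layout = ["coder-5.3", "planner-claude", "reviewer-gpt"]
    for cmd in project_session_commands("myproject", layout):
        print(shlex.join(cmd))
```

Printing the commands rather than running them keeps the sketch side-effect free; pipe the output to a shell (or call `subprocess.run` on each list) once the names look right.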

I mostly use Codex and Claude Code, but use some Amp and OpenCode as well. I've tried some mobile/web prompting flows (Happy, etc.), but generally byobu (tmux) over Tailscale is how I manage everything. This lets me run my agents and access them remotely when I want, and it's an easy adaptation of the same workflow I've used for years (just with AI agents doing the coding).

My general preference is to run the strongest models available. As of my latest testing, GPT-5.3 Codex xhigh is the strongest general coder for my workload. I run Opus 4.6 for writing, planning, and more interactive flows, and GPT-5.2 xhigh for planning, review, and deep work (leaving it alone for minutes or hours).

I have a custom ccstatusline and use ccusage and @ccusage/codex for some stats tracking, but besides that I'm pretty vanilla on plugins/add-ons.

Workflow

Historically I've leaned heavily into using Deep Research for research, but I've increasingly been migrating those ad-hoc queries into git repos/agentic coding tools for rapid iteration. Putting everything into agent-accessible markdown files is a huge multiplier.

As mentioned, I don't trick out my setup. There's a whole cottage industry of Claude Code plugins, custom skills, and meta-frameworks, and while I keep a repo/doc tracking and analyzing the major ones like superpowers, get-shit-done, etc., a well-written AGENTS.md seems to cover most of my needs.

My AGENTS.md (and symlinked CLAUDE.md) is where the core of my current process lives. Each project has a custom AGENTS.md that I've been evolving, and versions are shared with the rest of my (human) team as well. Even with my best practices boiled down into it, my AGENTS.md isn't super long: currently about 250 lines.
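The "symlinked CLAUDE.md" trick keeps a single source of truth that both Codex-style tools (reading AGENTS.md) and Claude Code (reading CLAUDE.md) pick up. The shell one-liner is `ln -s AGENTS.md CLAUDE.md`; below is a hypothetical Python helper that does the same and refuses to overwrite an existing CLAUDE.md.

```python
import os
import tempfile
from pathlib import Path


def link_claude_md(project_dir: Path) -> Path:
    """Make CLAUDE.md a relative symlink to AGENTS.md so both tools read one file."""
    agents = project_dir / "AGENTS.md"
    claude = project_dir / "CLAUDE.md"
    if not agents.exists():
        raise FileNotFoundError(agents)
    if claude.is_symlink() or claude.exists():
        raise FileExistsError(claude)  # don't clobber an existing CLAUDE.md
    # A relative target keeps the link valid wherever the repo is cloned.
    os.symlink("AGENTS.md", claude)
    return claude


if __name__ == "__main__":
    # Demo in a throwaway directory.
    with tempfile.TemporaryDirectory() as d:
        root = Path(d)
        (root / "AGENTS.md").write_text("# Project agents\n")
        link = link_claude_md(root)
        print(link.name, "->", os.readlink(link))
```

Commit the symlink itself to git so teammates get the same setup. On Windows, creating symlinks may require extra privileges.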

The most important bits in my AGENTS.md:

Points to `README.md` and `docs/` locations and the dev environment

Summarizes the project, its goals, and its design principles

Has a structure/treemap of the key folders

Lists the development philosophy and my preferred dev loop

Outlines roles/lanes for different agents

Specifies what documentation, tests, git commit practices, and other practical bits I want
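One way to keep a hand-evolved AGENTS.md from silently losing any of these pieces is a tiny lint check. The section names and the 300-line budget below are hypothetical stand-ins for the list above (the author's file is about 250 lines); adapt them to your own headings.

```python
import re
import sys
from pathlib import Path

# Hypothetical "## " section names mirroring the list above; adjust to your file.
REQUIRED_SECTIONS = {
    "Overview",          # README.md/docs/ pointers, goals, design principles
    "Structure",         # treemap of key folders
    "Development Loop",  # philosophy and preferred dev loop
    "Agent Roles",       # roles/lanes for planners, coders, reviewers
    "Conventions",       # docs, tests, git commit practices
}
MAX_LINES = 300  # assumption: a soft length budget


def lint_agents_md(text: str) -> list[str]:
    """Return a list of problems found in an AGENTS.md document."""
    # Collect level-2 headings only ("## Foo"); "### Foo" does not match.
    headings = {m.group(1).strip() for m in re.finditer(r"^##\s+(.+)$", text, re.MULTILINE)}
    problems = [f"missing section: {s}" for s in sorted(REQUIRED_SECTIONS - headings)]
    n_lines = len(text.splitlines())
    if n_lines > MAX_LINES:
        problems.append(f"file is {n_lines} lines (budget {MAX_LINES})")
    return problems


if __name__ == "__main__":
    for problem in lint_agents_md(Path(sys.argv[1]).read_text()):
        print(problem)
```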

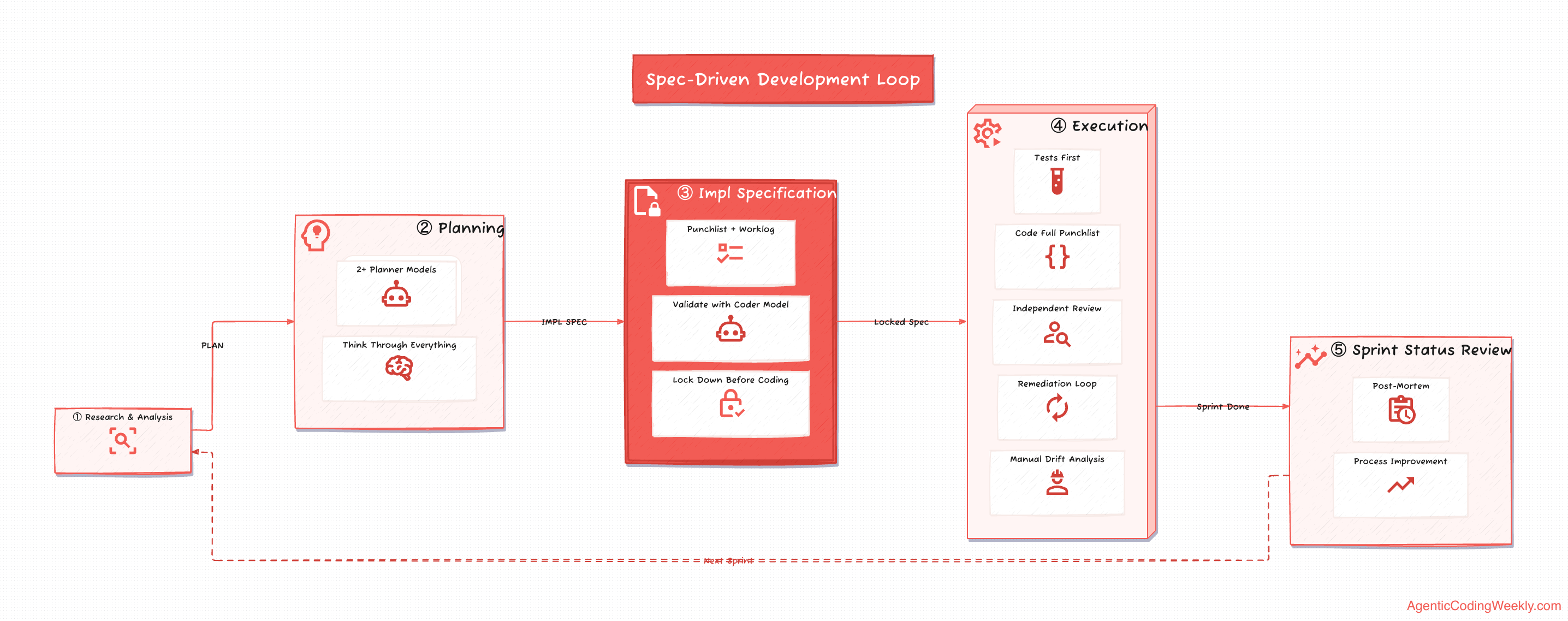

Most of this is self-explanatory, but the most important part is probably my spec-driven development loop, especially the independent review loop.

IMO, spec drift is where most people get in trouble with AI-assisted coding, and process is mostly about helping to keep you and your agents out of trouble on that front:

RESEARCH and ANALYSIS are done before a PLAN is created.

After the PLAN is ready, an IMPLEMENTATION (punchlist + worklog) is created. This is the core control point in my process, and every major decision is made here. I usually work with at least two `planner` models to think through everything, and then work with the `coder` model to make sure the IMPLEMENTATION is crystal clear for that model.

Once IMPLEMENTATION is locked down, the `coder` is able to autonomously knock out all the milestones in EXECUTION:

Test-driven: test coverage is required before code is written

Coding: write a first pass, go through the entire punchlist, give a summary for reviewers

Review: I found using independent reviewers to be very important. All issues are gathered up and sent to the `coder` to fix

Remediation: the `coder` goes through and fixes the review issues, and the result is sent back for review (repeat this part until everything is green)

Analysis: I do a post/pre-analysis at each milestone to correct for any implementation drift, scoping, or decision-making

At the end of each sprint, I have started doing a STATUS review that's basically a post-mortem, implementation review, etc. that's useful for both me and for improving the process in the future

Ad-hoc development still largely adheres to this loop!
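The Review and Remediation steps form a loop that only exits when every independent reviewer comes back clean. Here is a minimal sketch of that control flow, assuming reviewers and the coder are plain callables (in practice they would be separate agent sessions); the `max_rounds` cap and escalation error are my own additions, not part of the described process.

```python
from typing import Callable

Reviewer = Callable[[str], list[str]]  # takes the current work, returns issues found
Fixer = Callable[[str, list[str]], str]  # takes work + issues, returns revised work


def review_until_green(
    work: str,
    reviewers: list[Reviewer],
    fix: Fixer,
    max_rounds: int = 5,
) -> tuple[str, int]:
    """Run independent reviewers, send all issues to the coder, repeat until none remain.

    Returns the final work and the number of review rounds it took.
    """
    for round_no in range(1, max_rounds + 1):
        # Each reviewer looks at the same work independently; issues are pooled.
        issues = [issue for review in reviewers for issue in review(work)]
        if not issues:
            return work, round_no  # everything is green
        work = fix(work, issues)  # remediation pass by the coder
    raise RuntimeError(f"still failing review after {max_rounds} rounds; escalate to a human")


if __name__ == "__main__":
    # Toy stand-ins for reviewer and coder agents.
    reviewers = [
        lambda w: [] if "tests" in w else ["missing tests"],
        lambda w: [] if "docs" in w else ["missing docs"],
    ]
    fix = lambda w, issues: w + "".join(" + " + i.split()[-1] for i in issues)
    print(review_until_green("feature", reviewers, fix))
```

Pooling every reviewer's findings before the coder touches anything mirrors the "all issues are gathered up" step, and it avoids the coder ping-ponging between conflicting single-reviewer fixes.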

Despite basically not touching the code anymore, I feel like I still have a decent grip on what's being generated, and I'm still very hands-on with managing my agents on coding sprints. The amount of engineering effort on my side probably remains the same, but features (and new business lines) land much more quickly. While some people have adopted more chaotic flows, for now I still try to keep development as intentional as possible.

I also have an explicit "meta" rule at the end of my AGENTS.md:

## Meta: Evolving This File

This AGENTS.md is a living document. Update it when:

- You discover a workflow pattern that helps

- Something caused confusion

- A new tool or process gets introduced

- You learn something that would help the next person

Keep changes focused on process/behavior, not project-specific details (those go in docs/).

In practice, though, my post-sprint analysis is where I review with my agents what actually needs to be changed or updated in the AGENTS.md.

30-Day Stats

Over the past 30 days, I've clocked a pretty large amount of token usage, although 90-95% of it is cached tokens. I subscribe to ChatGPT Pro, and even at relatively high token usage I haven't hit any usage limits (the $200/mo is worth it). I use the Claude models via Bedrock and Vertex.

| Provider | Tokens/mo | API Cost/mo |
|---|---|---|
| Claude API | 1.3B | $1,211 |
| OpenAI API | 7.6B | $2,153 |
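Dividing cost by volume gives a blended price per million tokens, which shows how much the 90-95% cached share pulls the effective rate below list prices. This is simple arithmetic on the table's figures; treating the "API Cost/mo" column as an API-equivalent cost is my reading, since the OpenAI usage runs on a ChatGPT Pro subscription.

```python
# Figures from the 30-day table above: (tokens per month, API cost in USD).
USAGE = {
    "Claude API": (1.3e9, 1211.0),
    "OpenAI API": (7.6e9, 2153.0),
}


def blended_cost_per_mtok(tokens: float, cost_usd: float) -> float:
    """Average USD per million tokens across input, output, and cached tokens."""
    return cost_usd / (tokens / 1e6)


if __name__ == "__main__":
    for provider, (tokens, cost) in USAGE.items():
        rate = blended_cost_per_mtok(tokens, cost)
        print(f"{provider}: ${rate:.2f} per million tokens")
```

That works out to roughly $0.93 per million tokens on Claude and $0.28 on OpenAI, and the OpenAI figure is about ten times the $200/mo subscription price.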

What are these tokens used for? Over the past month, I've had multiple projects baking, including one optimizing GPU kernels, a new custom model training framework, multiple new evals, papers and blog posts and grant proposals, several training research plans, and also, most recently, two new 30K+ LOC greenfield projects.

— Leonard

3. Community Picks

How Taalas "Prints" LLM onto a Chip?

A startup called Taalas recently released an ASIC chip running Llama 3.1 8B (3/6 bit quant) at 17,000 tokens per second. This post concisely explains why GPUs have limits and how the Taalas chip works. Read the post.

A GPU shuttles data back and forth between VRAM (which holds the model weights and results) and the compute cores (which perform matrix multiplication), so it is limited by memory bandwidth. The Taalas chip turns the model's weights into physical transistors, so it (mostly) doesn't need to load anything from memory to process the input.
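A back-of-the-envelope bound shows why memory bandwidth matters: at batch size 1, generating each token requires reading every weight once, so tokens/s can't exceed bandwidth divided by weight bytes. The inputs below are illustrative assumptions (8B parameters, about 4 bits per weight, roughly 3.35 TB/s of HBM bandwidth, in the range of a current datacenter GPU), and the bound ignores KV-cache traffic, compute limits, and batching.

```python
def bandwidth_bound_tokens_per_s(
    params: float, bits_per_weight: float, bandwidth_bytes_per_s: float
) -> float:
    """Upper bound on single-stream decode speed if every weight is read once per token.

    Ignores KV-cache reads, compute limits, and batching, so real numbers are lower.
    """
    weight_bytes = params * bits_per_weight / 8
    return bandwidth_bytes_per_s / weight_bytes


if __name__ == "__main__":
    # Illustrative assumptions: 8B params, ~4 bits/weight, ~3.35 TB/s bandwidth.
    bound = bandwidth_bound_tokens_per_s(8e9, 4, 3.35e12)
    print(f"~{bound:.0f} tokens/s upper bound")
```

Under these assumptions the ceiling lands in the high hundreds of tokens per second for a single stream, more than an order of magnitude below the 17,000 tokens per second reported for the Taalas chip, which is the gap that baking weights into silicon is meant to close.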

Building for an Audience of One

An author with no experience in Zig or X11 internals used Claude and Gemini to build a high-performance custom task switcher for KDE.

AI tools enable you to build things that you would have never built otherwise. When you just want "the thing" rather than the process of building the thing, coding agents can be a game changer.

The post also discusses creating detailed specifications with milestones, running Claude Code in dev containers to strike a balance between approving every command and YOLO mode, using other coding harnesses like OpenCode, and the importance of having coding knowledge even when AI does the heavy lifting. Read the post and HN discussion.

4. Weekly Quiz

Subagents are specialized AI assistants that handle specific types of tasks. Claude Code has built-in subagents like Explore, Plan, and general-purpose. These five questions cover what you should know about subagents and creating custom ones.

Q1: What is the primary benefit of delegating a test suite run to a subagent instead of running it in your main Claude Code conversation?

A) The subagent can run tests in a sandboxed environment that prevents side effects

B) The subagent retries flaky tests automatically before reporting results

C) The subagent runs tests in parallel across multiple threads for faster execution

D) The verbose test output stays in the subagent's context, keeping your main conversation clean

Q2: In a subagent Markdown file, which YAML frontmatter fields are required?

A) name and description

B) name, description, and tools

C) name, description, and model

D) name, description, tools, and permissionMode

Q3: If you omit the tools field in a custom subagent's configuration, what happens?

A) The subagent gets no tools

B) The subagent gets only read-only tools as a safety default

C) The subagent inherits all tools available to the main conversation

D) The subagent must request tool access interactively each time it tries to use one

Q4: A subagent needs to validate that all Bash commands are read-only SQL queries. What's the recommended approach?

A) Remove Bash from the tools field and use a custom MCP server for database access instead

B) Set permissionMode: plan so the subagent operates in read-only mode

C) Write a PreToolUse hook that inspects the command and exits with code 2 to block disallowed operations

D) Put a strict "only SELECT queries" rule in the system prompt and rely on the model to comply

Q5: When should you prefer using the main conversation over delegating to a subagent?

A) When the task produces a large volume of output that needs to be referenced later

B) When you need frequent back-and-forth and iterative refinement with Claude

C) When the task is self-contained and can be summarized in a few sentences

D) When the task should run in the background while other work continues

That’s it for this week. I write this weekly on Mondays. If this was useful, subscribe below: